Code Reviews, the Biggest SDLC Bottleneck?

Code review, while a crucial component of the Software Development Life Cycle (SDLC), can often be perceived as a bottleneck for several reasons:

- Balancing quality and speed: Code reviews prioritize code quality, which takes time and attention to detail and can slow down the rapid pace at which developers produce code. The inherent tension between maintaining high quality standards and releasing features quickly can cause delays.

- Limited expertise: Not all team members may be equipped to review certain pieces of code, especially if it's in a domain or technology they're unfamiliar with. Waiting for the right expert to be available can introduce delays.

- PR size: In a busy development environment, the sheer volume of code changes can be overwhelming. Large pull requests (PRs) or merge requests are harder to review than smaller ones. It takes time to understand, evaluate, and provide meaningful feedback on large chunks of code.

- Asynchronous communication: Developers may be spread across different time zones, especially in today's remote-first work environments. Waiting for feedback from a colleague in a different time zone can introduce delays.

- Lack of clear guidelines/scoping: Without a clear set of guidelines or a checklist for code reviews, the process can become subjective. Different reviewers might focus on different aspects, leading to inconsistent feedback and prolonged back-and-forth discussions.

- Cognitive load: Reviewing code requires a high degree of concentration. A reviewer needs to understand the context, the problem the code is solving, and any potential implications of the code changes. This cognitive load can be taxing and time-consuming.

- Avoiding difficult conversations: Sometimes, reviewers might find issues with the code but might hesitate to give negative feedback, fearing conflicts or the possibility of coming across as overly critical. This can delay the review process as they might take more time to phrase their feedback or avoid it altogether.

- Dependency chains: Code awaiting review might be dependent on other code that's also under review. This creates a chain of dependencies where one delayed review can hold up several others.

- Tool limitations: While there are many tools available for code reviews, they might not always be optimized for the specific needs of a team. The wrong tool can introduce inefficiencies in the review process.

- Continuous integration (CI): Sometimes, the code review process might be waiting on automated tests to pass in a CI environment. If there's a queue or if the CI process is slow, it can add to the delay.
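To make the dependency-chain effect concrete, here is a minimal Python sketch (the PR identifiers are hypothetical). It takes a map of which PRs depend on which, inverts it, and walks it breadth-first to find every PR that transitively waits on one delayed review:

```python
from collections import defaultdict, deque


def blocked_by(delayed_pr: str, depends_on: dict[str, list[str]]) -> set[str]:
    """Return every PR that transitively waits on `delayed_pr`.

    `depends_on` maps a PR id to the PR ids it depends on.
    """
    # Invert the edges: for each PR, which PRs are waiting on it?
    dependents = defaultdict(list)
    for pr, deps in depends_on.items():
        for dep in deps:
            dependents[dep].append(pr)

    # Breadth-first walk over "waits on me" edges, starting at the delayed PR.
    blocked: set[str] = set()
    queue = deque([delayed_pr])
    while queue:
        current = queue.popleft()
        for pr in dependents[current]:
            if pr != delayed_pr and pr not in blocked:
                blocked.add(pr)
                queue.append(pr)
    return blocked
```

With `{"PR-2": ["PR-1"], "PR-3": ["PR-2"], "PR-4": ["PR-1"]}`, a stalled review of PR-1 holds up PR-2, PR-3, and PR-4, even though PR-3 never touches PR-1 directly.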

While code reviews can introduce delays, it's essential to remember that their primary purpose is to ensure code quality, maintainability, and knowledge transfer among team members. Addressing the above challenges through better processes, tools, training, and communication can help to optimize the code review process within the SDLC.

In this article, we’ll cover the basics of code reviews and why they are important, the code review process and best practices, the common pitfalls and how to avoid them, and we’ll provide you with an overview of the tools and platforms to help streamline code reviews.

The Basics of Code Review

Brief History of Code Review

The history of code review traces its roots back to the early days of software engineering and has evolved over time to address the changing needs and technologies in the software development field.

Early Peer Reviews

Before the term "code review" became widespread, the concept of reviewing work done by peers was already practiced in other fields. In software engineering, this approach became more formalized in the 1970s and 1980s as the industry began to recognize the importance of quality assurance.

Structured Walkthroughs

One of the earliest formalized methods of code review was the "structured walkthrough". This process involved the author of the code presenting their work to a group of colleagues. The group would then critique the code and suggest improvements.

Fagan Inspections

In 1976, Michael Fagan of IBM formalized a code inspection method known as the "Fagan Inspection". This method included roles such as the author, inspector, reader, and moderator, and it had a defined process comprising stages like planning, overview, preparation, inspection, rework, and follow-up.

While methods like Fagan Inspections were detailed and rigorous, they were also time-consuming. As software development methodologies evolved, especially with the rise of Agile and Continuous Integration/Continuous Deployment (CI/CD), teams moved toward more lightweight, iterative code review processes. Features such as pull requests on Git-based platforms (like GitHub, GitLab, and Bitbucket) facilitated this shift.

The rise of distributed version control systems, especially Git, has transformed the code review landscape. Platforms such as GitHub, Bitbucket, and GitLab introduced features that allowed developers to review and comment on code changes directly within the platform. These tools made it easier for teams, including distributed teams, to collaborate, discuss, and iterate on code changes.

Automation and Static Analysis

As technology advanced, tools were developed to automate parts of the code review process. Static code analysis tools could automatically detect certain code patterns, bugs, or potential vulnerabilities, allowing human reviewers to focus on the logic and design aspects of the code.

In conclusion, the practice of code review has evolved over the decades from formal, structured processes to more agile and tool-integrated practices. The emphasis throughout, however, has remained on improving code quality, fostering collaboration, and ensuring that software is maintainable and free of critical errors.

The Primary Goals of Code Review

The primary goal of code review is to ensure and improve the quality of software. This overarching objective can be broken down into several facets:

- Identifying and correcting bugs: One of the main reasons for code reviews is to catch errors or bugs that might have been overlooked by the original developer. By having another set of eyes (or multiple sets) review the code, the likelihood of spotting and addressing these issues increases.

- Maintaining code consistency: Code reviews help ensure that the codebase remains consistent in terms of style, structure, and design. This consistency makes the code easier to read, understand, and maintain in the long run.

- Ensuring code security: Security vulnerabilities can be introduced inadvertently. Through code reviews, developers can spot and rectify potential security issues before they become larger problems.

- Knowledge sharing and transfer: Code reviews facilitate the sharing of knowledge among team members. Developers become familiar with different parts of the codebase, learn new techniques, and understand the rationale behind certain decisions.

- Promoting best practices: Code reviews help reinforce best practices in coding, design patterns, and software architecture. They act as a platform for team members to share and discuss better ways to achieve a certain task or function.

- Building team cohesion: The collaborative nature of code reviews fosters improved communication and understanding among team members. They become more aligned in their approach to coding and problem-solving.

- Validating software design: Beyond just the code's syntax and logic, reviews can also validate the overall software design. Reviewers can provide feedback on the architecture, data flows, and other high-level design aspects.

- Documentation and comments: Ensuring that code is well-documented and that comments are meaningful is another goal of code reviews. Proper documentation aids future maintenance and provides clarity for other developers who might work on the code.

While ensuring and improving code quality stands out as the primary goal, the benefits of code reviews span various aspects of software development, from technical to interpersonal.

Why Code Reviews Are Essential

Code Review for Quality Assurance

- Error detection: A key aspect of quality assurance is the early detection and correction of errors. When multiple developers review code, the probability of identifying and rectifying mistakes, bugs, or inefficiencies increases.

- Standard enforcement: Code reviews help enforce coding standards and best practices. Consistent adherence to standards is vital to ensure that the software behaves reliably and predictably.

- Security: During the review process, vulnerabilities or potential security loopholes can be identified and addressed. Given the critical importance of software security, this facet of code reviews is vital for quality assurance.

Code Review for Knowledge Transfer

- Shared understanding: When developers review each other's code, they gain insights into different parts of the codebase and the rationale behind specific implementations. This shared understanding aids in overall system comprehension.

- Mentorship: More experienced developers can provide guidance and suggestions to less experienced team members during reviews, helping them learn and improve their skills.

- Continuous learning: Even seasoned developers can learn new techniques, approaches, or language features from their peers during the review process.

Code Review to Foster Team Collaboration

- Unified vision: By collaboratively reviewing code, team members align their understanding and vision of the project. This alignment helps in achieving project objectives more efficiently.

- Open communication: Code reviews provide a structured platform for open communication. Developers discuss, debate, and iterate on code changes, fostering a culture of constructive feedback.

- Builds trust: When team members review and learn from each other, it cultivates mutual trust and respect. This trust is essential for a healthy and productive team environment.

Code Review to Ensure Code Maintainability

- Consistency: Code reviews help maintain a consistent coding style and structure across the codebase. Consistent code is easier to read, understand, and modify.

- Detect code smells: "Code smells" are indications that certain parts of the code might be problematic in the future, even if they aren't causing immediate issues. Code reviews can help identify and address these early on.

- Documentation: Reviewers can highlight areas where comments or documentation might be lacking or unclear. Proper documentation ensures that future developers can understand and modify the code without undue difficulty.

In essence, code reviews serve as a multifaceted tool in software development. They not only ensure that the software is of high quality but also play a pivotal role in team dynamics, knowledge dissemination, and the long-term health of the software project.

The Code Review Process

The Code “Pre-Review”

Before submitting code for review, there are several preliminary checks and actions that can be taken to streamline the review process:

- Local testing: The developer should run the code locally to ensure that the changes work as expected and that no obvious bugs are introduced.

- Linting: Linters are tools that automatically analyze code to detect various issues, mostly related to style and formatting. Running a linter ensures the code conforms to the established coding standards. Examples include ESLint for JavaScript, Pylint for Python, and RuboCop for Ruby.

- Static analysis: Static code analysis tools examine code without executing it to identify potential problems, such as logical errors, unused variables, or potential vulnerabilities. Tools like SonarQube, Coverity, and Checkmarx are popular static analysis tools.

- Unit tests: If applicable, new or modified unit tests should be written to cover the changes. All tests, including existing ones, should be run to ensure nothing is broken.

- Commit message: Proper commit messages should be written to provide context about the changes, making it easier for reviewers to understand the rationale behind them.
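These checks are easy to script so that every author runs them the same way. The sketch below is a hypothetical helper, not part of any particular tool: it runs each command in order, treats a zero exit code as a pass (the convention most linters and test runners follow), and stops at the first failure so the author can fix it before requesting a review.

```python
import subprocess
import sys


def run_pre_review_checks(checks: list[tuple[str, list[str]]]) -> list[tuple[str, bool]]:
    """Run (name, command) checks in order; stop at the first failing one."""
    results = []
    for name, argv in checks:
        completed = subprocess.run(argv, capture_output=True, text=True)
        passed = completed.returncode == 0  # exit code 0 means the check passed
        results.append((name, passed))
        if not passed:
            # Surface the tool's own output so the author sees what to fix.
            print(f"{name} failed:\n{completed.stdout}{completed.stderr}", file=sys.stderr)
            break
    return results
```

For example, `run_pre_review_checks([("lint", ["pylint", "my_package"]), ("unit tests", ["pytest", "-q"])])` assumes Pylint and pytest are installed and that `my_package` is a placeholder for your own package; substitute your team's commands.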

To monitor the code pre-review process, you can leverage:

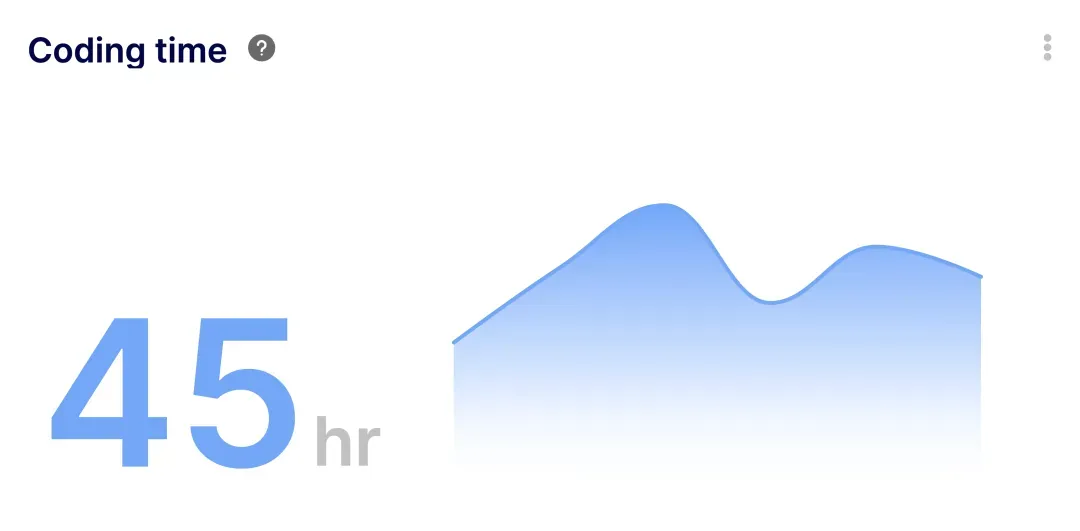

The Coding Time Metric

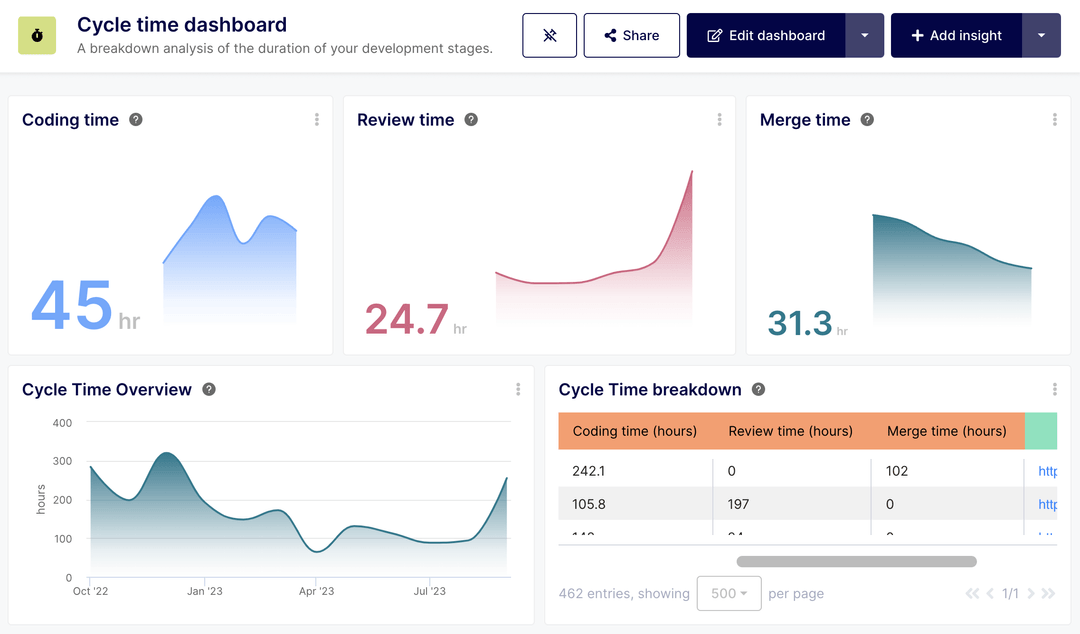

The Coding Time metric measures the time elapsed between the start of development (either the first commit or the PR creation date) and the start of the review. This span covers the coding itself, the automated testing phase, and the review pick-up time.
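As a rough illustration, the calculation can be sketched in Python. This is not Keypup's implementation: it assumes development starts at the earlier of the first commit and the PR creation date (a draft PR can be opened before any commit is pushed), and that the review starts when the first review is submitted.

```python
from datetime import datetime, timedelta
from typing import Optional


def coding_time(first_commit_at: Optional[datetime], pr_created_at: datetime,
                review_started_at: datetime) -> timedelta:
    """Elapsed time from the start of development to the start of review."""
    # Assumption: development starts at whichever comes first, the first commit
    # or the PR creation (a PR may be opened before any commit exists).
    if first_commit_at is None:
        start = pr_created_at
    else:
        start = min(first_commit_at, pr_created_at)
    return review_started_at - start
```

A first commit on March 1 at 09:00 and a first review on March 3 at 15:00 give a coding time of two days and six hours, regardless of when the PR itself was opened in between.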

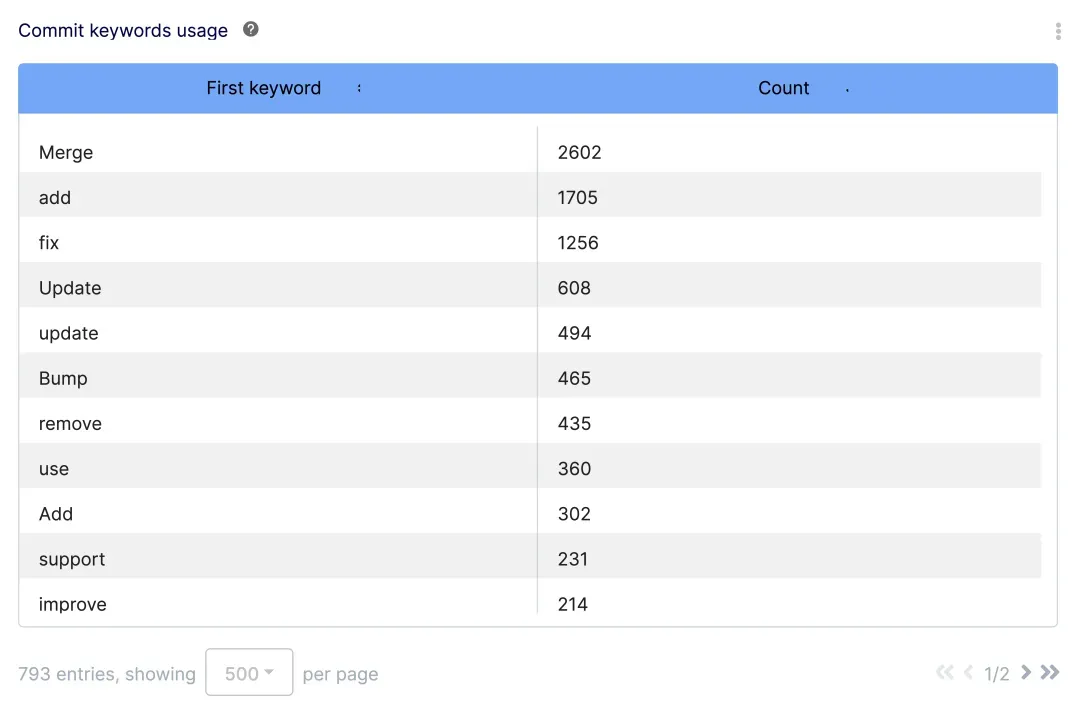

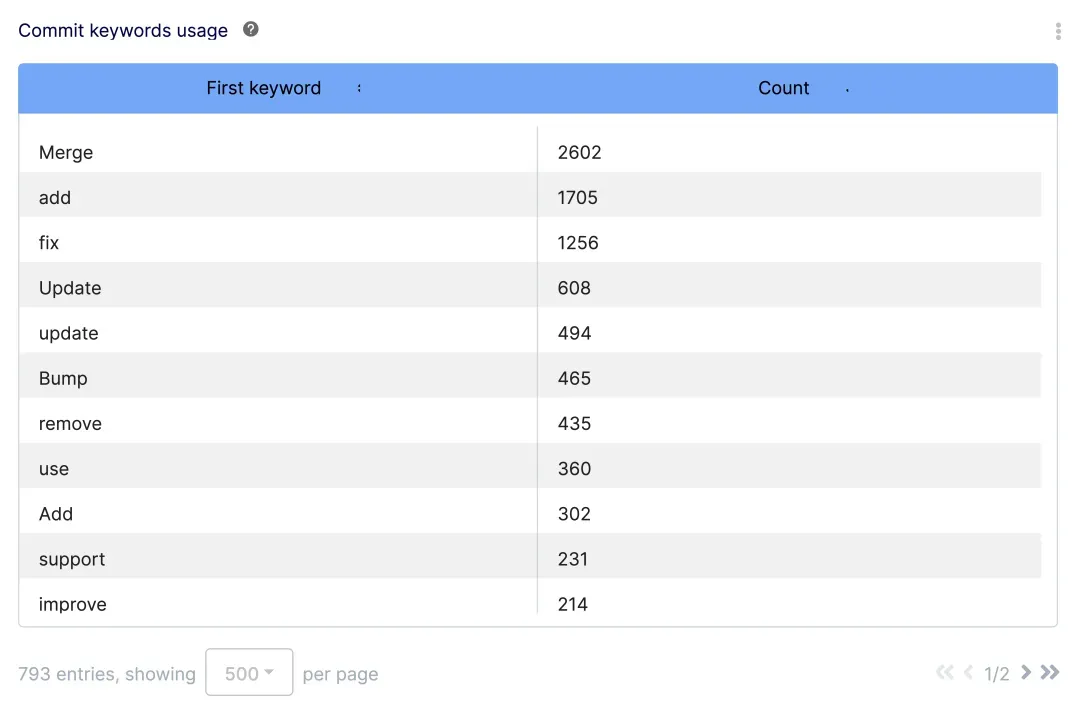

The Commit Keyword Usage Report

The Commit Keyword Usage Report provides an overview of the first keyword used in each commit message. This report can help classify reviews by priority and ensure proper naming conventions are followed across team members.
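A report like this boils down to counting the first word of each commit message. The sketch below assumes a Conventional Commits-style vocabulary (`fix:`, `feat(api):`, `docs:`), which is only an example convention; it normalizes case, scopes, and trailing colons before counting.

```python
from collections import Counter


def first_keyword_counts(commit_messages: list[str]) -> Counter:
    """Count the first keyword of each commit message, ignoring empty messages."""
    counts: Counter = Counter()
    for message in commit_messages:
        words = message.split()
        if not words:
            continue
        # Normalize "FIX", "fix(api):" and "feat!:" to "fix" / "feat".
        keyword = words[0].lower().split("(")[0].rstrip(":!")
        counts[keyword] += 1
    return counts
```

Calling `.most_common()` on the result shows the keyword mix, and any keyword outside the agreed list points to commits that break the team's naming convention.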

The Main Code Review (Manual Inspection)

Once the code is ready and submitted for review, the core phase of the review process begins:

- Assign reviewers: Based on the nature of the changes and the codebase's area, appropriate reviewers are assigned. These could be peers, senior developers, or domain experts.

- Review for logic and design: The reviewers evaluate the submitted code for its logic and design, ensuring it's efficient, clean, and follows established architectural patterns.

- Feedback and discussion: Feedback is provided, usually in the form of inline comments on specific lines of code or general comments about the entire set of changes. The developer and reviewers can then discuss, clarify doubts, and iterate on the feedback.

- Multiple rounds: Often, the code review process involves multiple rounds where the developer makes revisions based on feedback, and reviewers inspect the changes again until all parties are satisfied.
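Assigning reviewers by codebase area is often automated with path-based ownership rules, such as GitHub's CODEOWNERS file. The Python sketch below imitates that idea with hypothetical team names and patterns. As in CODEOWNERS, the last matching rule wins, but it uses `fnmatch` globbing, which is simpler than the real CODEOWNERS pattern syntax (for instance, `*` here also matches `/`).

```python
from fnmatch import fnmatch

# Hypothetical ownership rules: (glob pattern, reviewers). Later rules win.
OWNERSHIP_RULES = [
    ("*", ["@team-leads"]),
    ("src/payments/*", ["@payments-experts"]),
    ("*.sql", ["@db-reviewers"]),
]


def suggest_reviewers(changed_files: list[str],
                      rules: list[tuple[str, list[str]]] = OWNERSHIP_RULES) -> set[str]:
    """Suggest reviewers for a PR from the paths it changes."""
    reviewers: set[str] = set()
    for path in changed_files:
        owners: list[str] = []
        for pattern, rule_owners in rules:
            if fnmatch(path, pattern):
                owners = rule_owners  # keep only the last matching rule
        reviewers.update(owners)
    return reviewers
```

A PR touching `src/payments/charge.py` and `README.md` would be routed to `@payments-experts` and `@team-leads`, so the domain expert is pulled in only when their area changes.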

To monitor the main code review process, you can leverage:

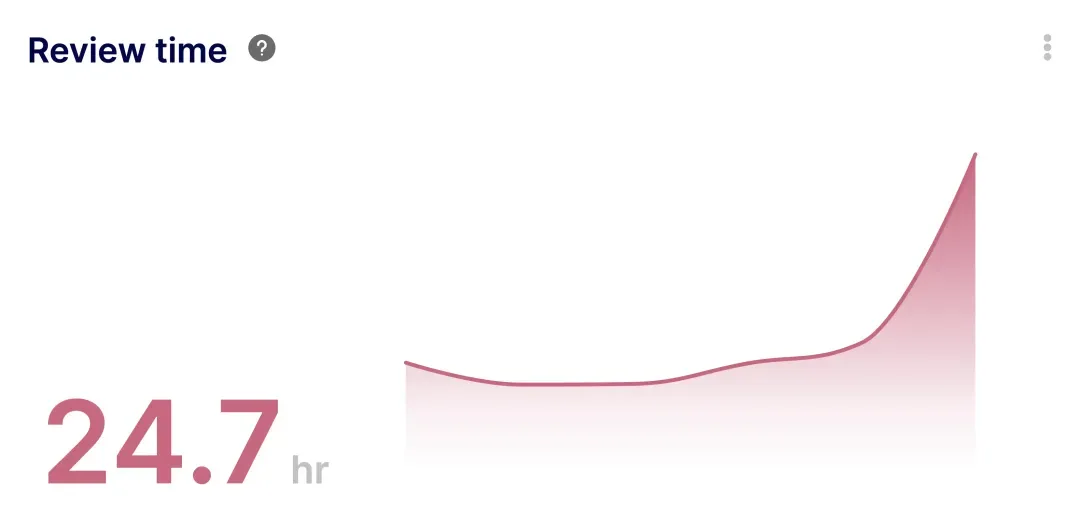

The Review Time Metric

The Review Time Metric calculates the time elapsed between the first review submission and the PR approval.
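A minimal sketch of this calculation, assuming each review is recorded as a (submitted_at, state) pair using GitHub-style state names. It treats the most recent approval as the approval time, which is an assumption: a later push can require re-approval.

```python
from datetime import datetime, timedelta
from typing import Optional


def review_time(reviews: list[tuple[datetime, str]]) -> Optional[timedelta]:
    """Time from the first submitted review to the latest approval.

    Returns None if the PR has no reviews or has not been approved yet.
    """
    if not reviews:
        return None
    first_submitted = min(at for at, _ in reviews)
    approvals = [at for at, state in reviews if state == "APPROVED"]
    if not approvals:
        return None
    return max(approvals) - first_submitted
```

A comment on March 4 at 10:00, a change request at 16:00, and an approval on March 5 at 11:00 yield a review time of 25 hours; the back-and-forth in the middle is what this metric makes visible.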

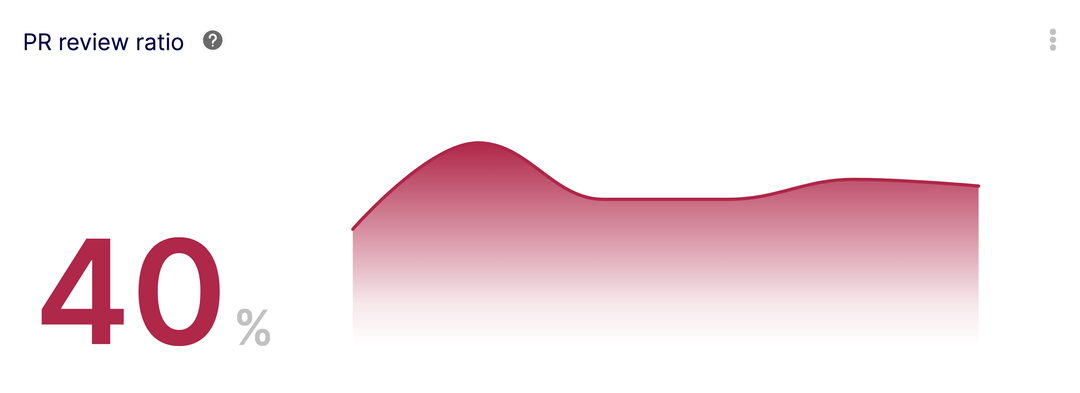

The Pull Request Review Ratio

With the Pull Request Review Ratio, you can ensure that all PRs get the minimum required reviews.
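One plausible way to compute such a ratio, sketched in Python: the share of PRs that received at least a minimum number of reviews, plus the list of PRs that fall short. The PR numbers and the default minimum of one review are illustrative assumptions.

```python
from typing import Optional


def review_ratio(review_counts: dict[str, int], min_reviews: int = 1) -> Optional[float]:
    """Share of PRs with at least `min_reviews` reviews; None if there are no PRs."""
    if not review_counts:
        return None
    compliant = sum(1 for count in review_counts.values() if count >= min_reviews)
    return compliant / len(review_counts)


def under_reviewed(review_counts: dict[str, int], min_reviews: int = 1) -> list[str]:
    """PRs that still need more reviews, sorted by id."""
    return sorted(pr for pr, count in review_counts.items() if count < min_reviews)
```

For `{"#101": 2, "#102": 0, "#103": 1, "#104": 1}` the ratio is 0.75 with a minimum of one review, and `#102` is the PR to chase.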

The Average PR Size Metric

With the Average PR Size Metric, you can track the number of changed lines of code (additions + deletions) in PRs, isolating big PRs that are likely to cause delays in both coding and review time.
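The arithmetic behind this metric is simple: size is additions plus deletions, averaged across PRs. The sketch below also flags PRs above a threshold; the 400-line default is an arbitrary example, not an industry standard, so tune it to your team.

```python
from statistics import mean
from typing import Optional


def pr_size_report(prs: dict[str, tuple[int, int]],
                   threshold: int = 400) -> tuple[Optional[float], list[str]]:
    """Average PR size and the PRs above `threshold` changed lines.

    `prs` maps a PR id to (additions, deletions); size = additions + deletions.
    """
    sizes = {pr: additions + deletions for pr, (additions, deletions) in prs.items()}
    average = mean(sizes.values()) if sizes else None
    big = sorted(pr for pr, size in sizes.items() if size > threshold)
    return average, big
```

Note how a single large PR skews the average: sizes of 150, 1,150, and 50 lines average out to 450, which is why listing the outliers is often more actionable than the mean alone.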

Post-Code Review Actions

After the review process is concluded:

- Merging: Once the reviewers approve the changes, the code can be merged into the target branch (often the main or master branch).

- Continuous integration: After merging, the code often goes through a continuous integration (CI) process where automated tests are run, and the application is built to ensure there are no integration issues.

- Follow-ups: Sometimes, during the review, certain topics might be flagged for further discussion or exploration but aren't deemed critical enough to block the current changes. These can be noted as "follow-up" items. They might result in new tasks, tickets, or further reviews in the future.

- Document learnings: Any significant insights, common pitfalls, or best practices that emerged from the review can be documented. This helps to refine the development and review process for future tasks.

Remember, the exact steps and tools in a code review process might vary based on the team's preferences, the nature of the project, and the tools in use. The essence, however, remains largely consistent: to collaboratively ensure that code changes are of high quality and align with the project's goals.

To monitor the post-code review process, you can leverage:

The Merge Time Metric

The Merge Time Metric calculates the average time elapsed between the last review approval and the PR being merged into a specific branch. It isolates the post-review part of the cycle time, ensuring that approved PRs are not forgotten.
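A minimal sketch of both ideas in this description: the average post-approval delay, and a check for approved PRs that are still waiting to be merged. The input shapes and the one-day limit are assumptions for illustration.

```python
from datetime import datetime, timedelta


def average_merge_time(prs: list[tuple[datetime, datetime]]) -> timedelta:
    """Mean delay between last approval and merge, for (approved_at, merged_at) pairs."""
    if not prs:
        return timedelta(0)
    total = sum((merged_at - approved_at for approved_at, merged_at in prs), timedelta(0))
    return total / len(prs)


def forgotten_approvals(approved_unmerged: dict[str, datetime], now: datetime,
                        max_wait: timedelta = timedelta(days=1)) -> list[str]:
    """Approved-but-unmerged PRs that have waited longer than `max_wait`."""
    return sorted(pr for pr, approved_at in approved_unmerged.items()
                  if now - approved_at > max_wait)
```

Merges that land 2 hours and 24 hours after approval average out to 13 hours; the second function is the kind of check a daily reminder could run.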

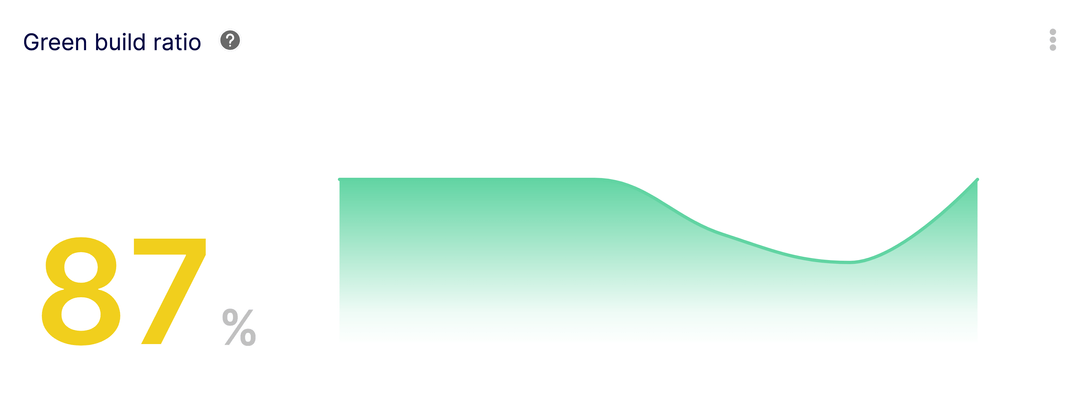

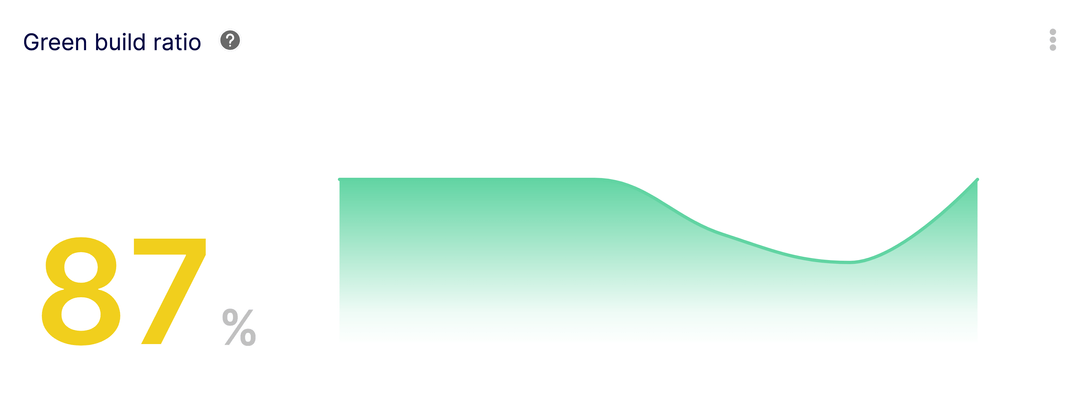

The Green Build Ratio Metric

The Green Build Ratio Metric helps ensure that the merged PRs have a green build status.
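As a sketch, the ratio can be computed from the build status recorded for each merged PR. The status strings mirror the states of GitHub's commit status API (`success`, `failure`, `pending`, `error`); counting only `success` as green is an assumption about how "green" is defined.

```python
from typing import Optional


def green_build_ratio(build_statuses: list[str]) -> Optional[float]:
    """Share of merged PRs whose build status is "success"; None if there are none."""
    if not build_statuses:
        return None
    green = sum(1 for status in build_statuses if status == "success")
    return green / len(build_statuses)
```

Returning `None` for an empty period keeps "no merges yet" distinct from "every build failed".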

Code Review Best Practices

Adopting code review best practices is pivotal in cultivating a constructive, efficient, and collaborative software development environment, ensuring not just the quality of code but also fostering continuous learning and teamwork. Let’s dive into it.

Code Reviews Start with a Positive Tone

Why: Setting a positive tone encourages a collaborative and receptive atmosphere. It ensures that feedback is perceived as constructive rather than critical.

How: Begin your comments by acknowledging the effort or aspects of the code that you found well-done before pointing out areas of improvement.

Be Specific in Your Code Review Feedback

Why: Vague feedback can be confusing and might not lead to the desired improvements.

How: Point out specific lines or sections of code when giving feedback. Instead of saying, "This method is too complex," you might say, "Consider breaking down this method into smaller functions for better readability."

Limit the Scope of Code Reviews

Why: Extremely large code reviews can be overwhelming and decrease the chances of catching issues.

How: Encourage developers to make smaller, more frequent pull requests. If a large review is unavoidable, consider breaking the review into parts or sections.

Use a Standardized Checklist for Your Code Reviews

Why: A checklist helps ensure consistency across reviews and reminds reviewers of common issues to look for.

How: Create a list of items to check during every code review, like ensuring new methods have comments, checking for potential security vulnerabilities, or verifying the presence of tests for new features.
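A checklist can live as plain data next to the code, and some items can be partly automated. In this hypothetical sketch, the item wording and the `src/` and `tests/` layout are assumptions; `missing_tests` is only a heuristic for the "tests for new features" item and does not replace the reviewer's judgment.

```python
# Hypothetical team checklist; adapt the wording to your own standards.
CHECKLIST = [
    "New public functions have docstrings or comments",
    "Inputs from users or external systems are validated",
    "New features come with tests",
]


def missing_tests(changed_files: list[str]) -> bool:
    """Heuristic: Python source under src/ changed, but nothing under tests/ did."""
    touched_source = any(f.startswith("src/") and f.endswith(".py") for f in changed_files)
    touched_tests = any(f.startswith("tests/") for f in changed_files)
    return touched_source and not touched_tests


def open_items(answers: dict[str, bool]) -> list[str]:
    """Checklist items the reviewer has not ticked yet, in checklist order."""
    return [item for item in CHECKLIST if not answers.get(item, False)]
```

Keeping the checklist in version control means changes to the review standard are themselves reviewed.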

Prioritize Automated Tests Before Code Review

Why: Automating tests can catch a plethora of issues before human review, allowing the reviewers to focus on the logic and design.

How: Ensure that a CI (continuous integration) pipeline runs unit tests, integration tests, linters, and static analysis tools before the code is reviewed by humans.

Avoid Code Review Bikeshedding (Focus on the Big Issues)

Why: Engaging in prolonged debates about trivial matters can divert attention from more pressing issues and delay the review process.

How: Focus on the core logic, design, and potential impact of the code changes. If discussions start to veer off into minor style or preference debates, steer them back or defer those discussions for later.

Provide Timely Code Review Feedback

Why: Timely feedback is more relevant and allows developers to address issues while the context is still fresh.

How: Allocate specific times during the day or week for code reviews. Using tools that notify reviewers when a review is requested can also help in timely reviews.

Always Provide Context for Your Code Review Feedback

Why: Context helps the author understand the reasoning behind the feedback, making it more actionable.

How: Instead of just pointing out an issue, explain why it's an issue. For instance, rather than saying, "Avoid using global variables," you might add, "Global variables can introduce unintended side effects and make the code harder to maintain."

Remember to Review the Code, Not the Coder

Why: Code reviews should be a critique of the work, not the individual. Making it personal can lead to defensiveness and conflict.

How: Frame feedback objectively. Avoid using personal pronouns. For example, say, "This method can be refactored for clarity," instead of "You wrote a confusing method."

Implementing these best practices can greatly enhance the effectiveness of code reviews, promote a positive and collaborative team culture, and ultimately lead to better software quality.

Common Pitfalls in Code Reviews and How to Avoid Them

Overly Critical Code Review

When a reviewer focuses solely on flaws and expresses feedback in a harsh or negative manner, it can demoralize the developer, breed defensiveness, and inhibit productive dialogue.

Do's:

- Start with positive feedback.

- Frame critiques as suggestions or questions.

- Use neutral language, focusing on the code and not the coder.

Don'ts:

- Avoid personal or pointed language.

- Don't make assumptions about the coder's intentions or capabilities.

- Avoid being overly pedantic on non-critical issues.

Skipping the Code Review Due to Time Constraints

Rushing through or completely skipping a code review due to time pressures can lead to undiscovered bugs, design flaws, or inefficiencies making their way into the production code.

Do's:

- Set aside dedicated time for code reviews in the development cycle.

- If pressed for time, prioritize reviewing critical components or areas with substantial changes.

- Encourage smaller, more frequent pull requests for easier reviews.

Don'ts:

- Don't make a habit of bypassing reviews.

- Avoid merging code without at least one review.

- Don't let "deadline pressures" compromise the quality of the codebase.

Not Checking for Potential Security Vulnerabilities During Code Review

Overlooking potential security issues during code review can introduce vulnerabilities into the application, risking data breaches and other malicious attacks.

Do's:

- Familiarize yourself with common security vulnerabilities (like those in the OWASP Top 10).

- Check for proper data validation, authentication, and authorization mechanisms.

- Ensure sensitive data is protected, encrypted, or redacted.

Don'ts:

- Don't assume that external libraries or third-party code is always secure.

- Avoid leaving debug or test code that could expose sensitive data or functionality.

- Don't neglect potential red flags even if they seem minor.
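Input handling is a concrete example of what to look for. The Python sketch below uses the standard-library `sqlite3` module and a hypothetical `users` table to contrast a query a reviewer should flag (user input formatted into the SQL string) with the parameterized form that OWASP recommends against SQL injection.

```python
import sqlite3


def find_user_unsafe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Red flag: user input is formatted straight into the SQL text,
    # so a crafted value can change the meaning of the query.
    return conn.execute(f"SELECT id, name FROM users WHERE name = '{name}'").fetchall()


def find_user_safe(conn: sqlite3.Connection, name: str) -> list[tuple]:
    # Preferred: a parameterized query; the driver passes `name` as data, never as SQL.
    return conn.execute("SELECT id, name FROM users WHERE name = ?", (name,)).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, name TEXT)")
conn.executemany("INSERT INTO users (name) VALUES (?)", [("alice",), ("bob",)])

payload = "x' OR '1'='1"
print(find_user_unsafe(conn, payload))  # the injected OR clause matches every row
print(find_user_safe(conn, payload))    # no user is literally named like the payload
```

In a diff, the telltale sign is string formatting or concatenation inside a query call; the fix is usually a one-line change to placeholders.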

Relying Solely on Automated Tools for Code Review

While automated tools (linters, static analysis, etc.) are invaluable, they cannot catch every issue, especially issues related to logic, design, and a contextual understanding of the application.

Do's:

- Use tools as a first pass to catch common or straightforward issues.

- Always perform manual reviews to assess logic, design patterns, and application-specific nuances.

- Combine insights from tools with human judgment and expertise.

Don'ts:

- Don't blindly trust tool results without understanding their output.

- Avoid treating tools as a complete substitute for human review.

- Don't ignore tool warnings without valid reasons.

In essence, while code reviews are instrumental in maintaining and enhancing the quality of code, avoiding these pitfalls is essential to ensure that the process remains constructive, efficient, and effective.

Software Development Analytics to Monitor and Improve the Code Review Process

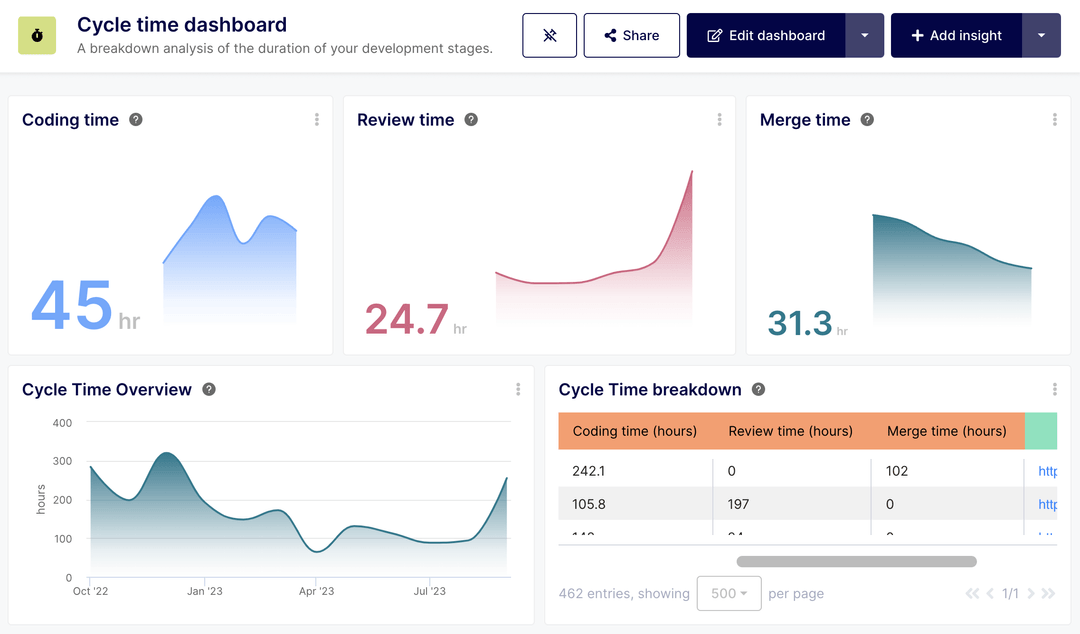

Tools like Keypup provide insights into the software development process, capturing metrics such as cycle time, review time, review volume and frequency, pull request size, and more, to inform and improve development practices.

How to leverage Keypup for the code review process:

- Monitor key metrics to identify bottlenecks or inefficiencies in the development and review process by leveraging the Cycle Time Dashboard.

- Use historical data to track improvements over time and gauge the impact of changes to the development process.

Code Collaboration Platforms

Code collaboration tools such as GitHub, GitLab, and Bitbucket provide integrated environments for hosting code repositories, creating pull requests, and conducting code reviews.

How to leverage code collaboration tools for the code review process:

- Utilize pull request (or merge request) features to initiate code reviews.

- Make use of integrated comment systems for inline feedback.

- Use built-in integrations with CI/CD tools to automatically run tests before reviews.

- Take advantage of platform-specific extensions or plugins for enhanced review capabilities.

Linting and Static Analysis Tools

Linters and static analysis tools scan the codebase for syntax errors, stylistic issues, potential bugs, and even security vulnerabilities without executing the code.

How to leverage linting and static analysis tools for the code review process:

- Integrate these tools into the development environment or CI/CD pipeline to catch issues early.

- Customize rule sets based on the team's coding standards and guidelines.

- Use the tools as an initial filter before manual code reviews to focus on logic and design issues.

CI/CD Tools

CI/CD tools such as Jenkins, Travis CI, CircleCI, GitHub Actions, and GitLab CI/CD automate the building, testing, and deployment of applications, ensuring that the code integrates well and passes all tests before deployment.

How to leverage these tools in your code review process:

- Set up automated build and test pipelines to run whenever code is committed or a pull request is created.

- Integrate linting and static analysis tools within the CI process.

- Use CI results as a prerequisite for manual code reviews, ensuring only code that passes automated checks is reviewed.

- Implement automated deployment for code that passes both automated and manual reviews.

Incorporating these tools into the code review process not only streamlines the workflow but also ensures consistent code quality, reduces manual review burden, and fosters a culture of continuous improvement.

Concluding Thoughts: Measuring for Success in the Code Review Process

In the dynamic world of software development, code reviews stand out as an invaluable practice, enabling teams to collaboratively refine and enhance code quality. As we've explored, the right methodologies, tools, and a proactive approach can make this process effective and efficient.

However, it's crucial to remember that what gets measured gets improved. With platforms like Keypup, teams can gain actionable insights into their software development process and focus on the metrics that truly matter. By consistently monitoring cycle time, review duration, pull request size, and other key indicators, developers and managers can quickly identify and address bottlenecks, optimizing every phase of the SDLC, including the critical code review step.

In essence, while tools, best practices, and collaboration play central roles, it's the strategic measurement and continuous improvement that truly set apart great development teams. As you embark on or refine your code review journey, always keep metrics at the forefront, ensuring that your efforts align with the overarching goals of delivering high-quality software in an agile and responsive manner.